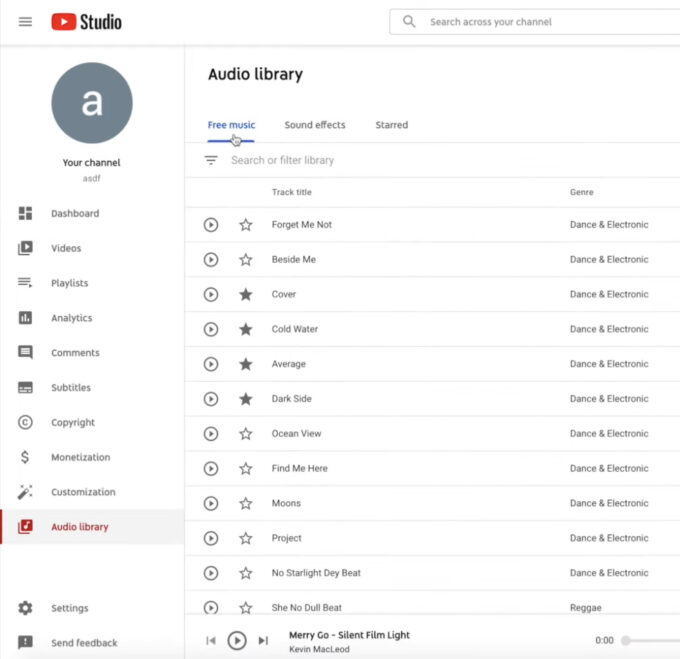

YouTube Analytics Added New Traffic Sources Report With the new metric, you can see how users find your videos. The new report is located in the Analytics tab in YouTube Studio. The report is titled “How viewers find your video,” and ...

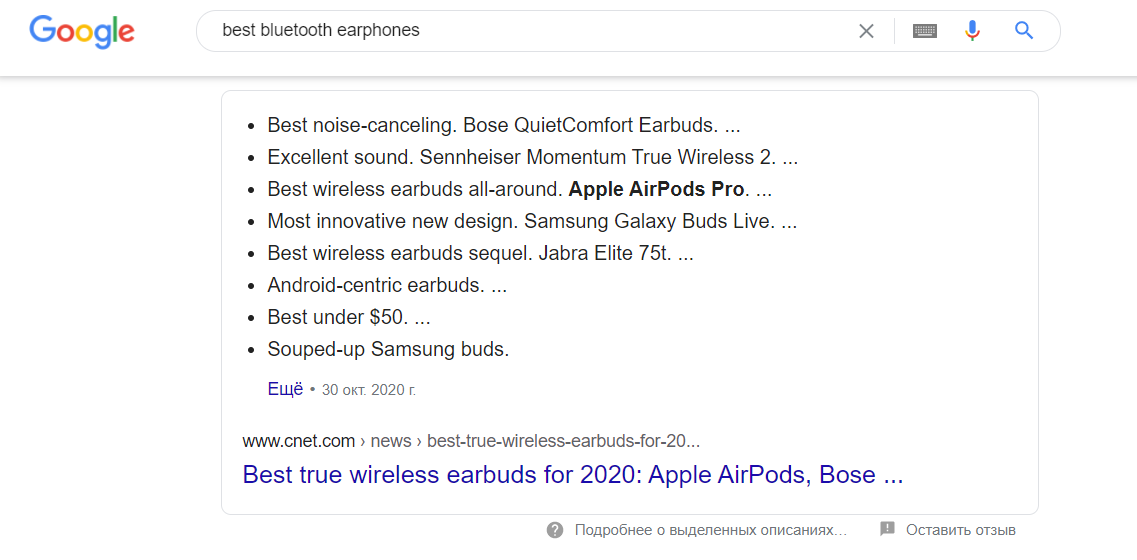

Google has started highlighting featured results in bold A new kind of highlighting can be seen in extended snippets. Usually Google uses bold to highlight keywords in snippets. But now it has begun to highlight products in extended snippets. As ...

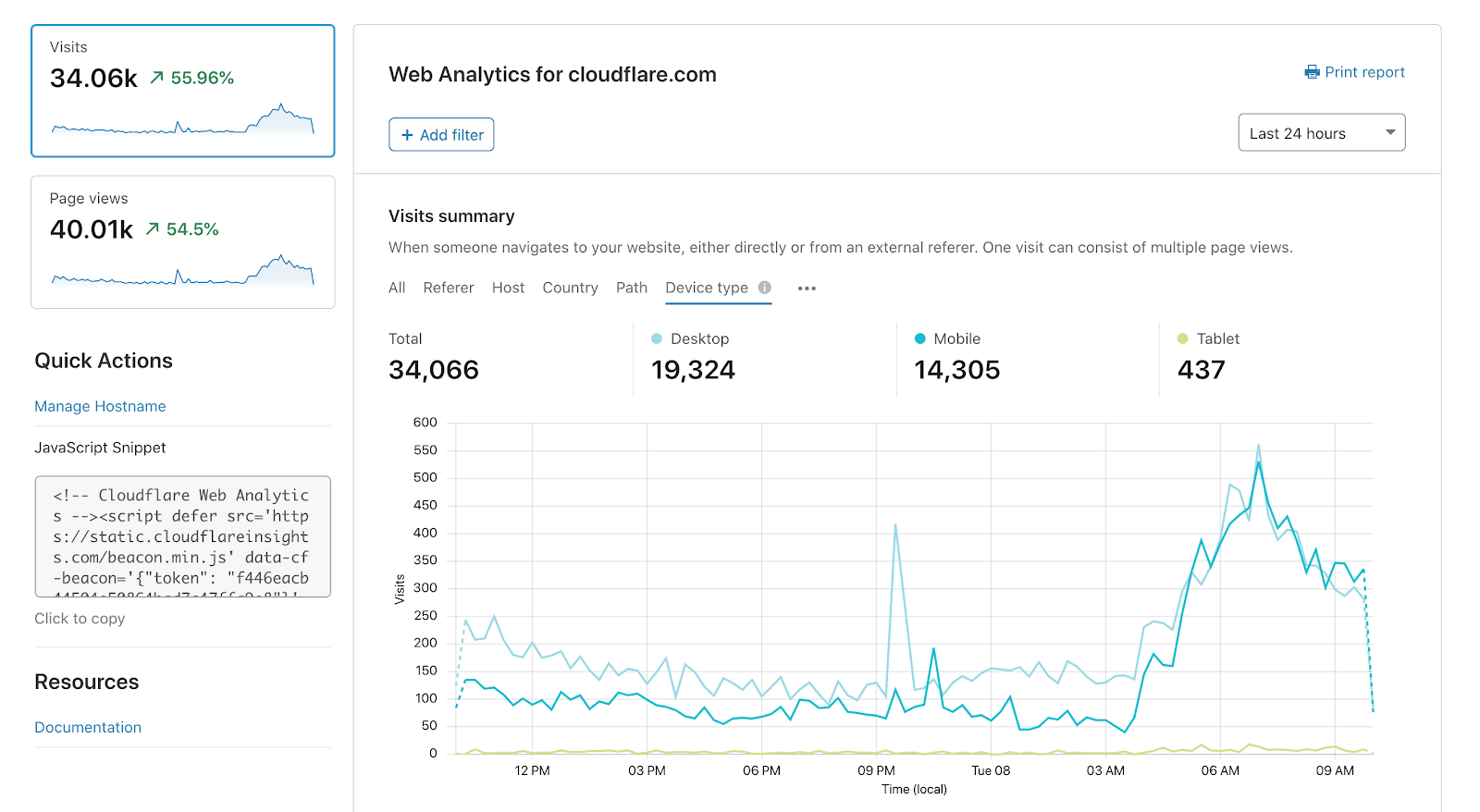

How to speed up your website: a selection of working methods How to increase website loading speed, reduce page weight, and prepare the site for Google Core Web Vitals. We will deal with image optimization and new parameters. We collected the most important and detailed ...

Google spoke about how it detects duplicate content and conducts canonicalization The developers talked about this in the new episode of the Search Off the Record podcast. Google employees John Mueller, Martin Splitt, Gary Illyes, and Lizzi Harvey have elaborated ...

What is robots.txt: the basics for newbies Successful indexing of a new site depends on many components. One of them is the robots.txt file, which any novice webmaster should know well. Updated material ...

Anchor Text SEO Guide What are link anchors and how to choose anchor text for SEO in 2020. In this guide, we explain what anchor text is and how you can work with link anchors so that it is ...

Dogpile search engine Dogpile is a metasearch engine for information on the World Wide Web that fetches results from Google, Yahoo!, Yandex, Bing, and other popular search engines, including those from audio and video content providers such as Yahoo!. What ...

Facebook Page SEO Optimization for Business Optimizing a website is not a hard task if the website follows the requirements of the search engine. The same goes for Facebook. You need to optimize your Facebook page to get good results ...